As many of you know, I’ve taken a stand with many other storage professionals to try to educate the industry that peak performance is vastly different to real world performance. I covered this in a post titled: Peak Performance vs Real World Performance.

I have also given a specific example of Peak Performance vs Real World Performance with a Business Critical Application (MS Exchange), where I demonstrate that the first and most significant constraining factor for Exchange performance is compute (CPU/RAM). Achieving more IOPS is therefore unnecessary to achieve the business outcome (which is supporting a given number of Exchange mailboxes and messages per day).

However, vendors (all of them) who offer products which provide storage, whether as a component such as in HCI or as a fully focused offering, continue to promote peak performance numbers. They do this because the industry as a whole has promoted, and continues to promote, these numbers as if they were relevant, with vendors trying to one-up each other with nonsense comparisons.

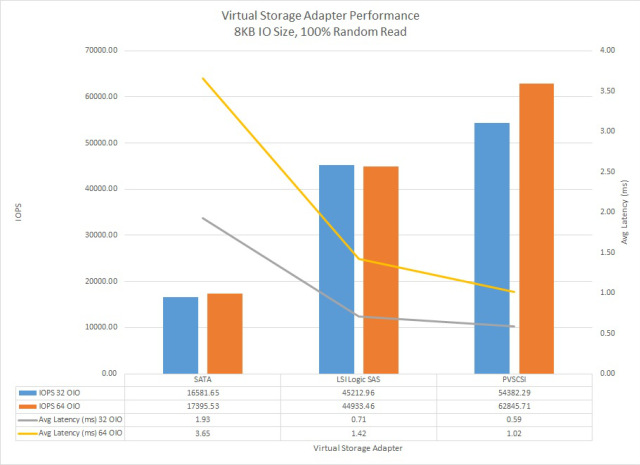

VMware and the EMC federation have made a lot of noise about In-Kernel delivering better performance than Software Defined Storage running within a VM, which some refer to as a VSA (Virtual Storage Appliance). At the same time, the same companies/people are recommending business critical applications (vBCA) be virtualized. This is a clear contradiction, as I explain in an article I wrote titled In-Kernel versus Virtual Storage Appliance, which in short concludes by saying:

…a high performance (1M+ IOPS) solution can be delivered both In-Kernel or via a VSA, it’s simple as that. We are long past the days where a VM was a significant bottleneck (circa 2004 w/ ESX 2.x).

I stand by this statement and the in-kernel vs VSA debate is another example of nonsense comparisons which have little/no relevance in the real world. I will now (reluctantly) cover off (quickly) some marketing numbers before getting to the point of this post.

VMware VSAN 6.2

Firstly, congratulations to VMware on this release. I believe you now have a minimum viable product thanks to the introduction of software-based checksums, which are essential for any storage platform.

VMW Claim One: For the VSAN 6.2 release, “delivering over 6M IOPS with an all-flash architecture”

The basic math for a 64 node cluster is ~93,750 IOPS per node (6M / 64). However, as I have seen this benchmark from Intel showing 6.7 million IOPS for a 64 node cluster, let’s give VMware the benefit of the doubt and assume it’s an even 7M IOPS, which equates to 109,375 IOPS per node.
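The per-node figures here are simple division. A minimal sketch of that arithmetic follows, using only the cluster totals and node count quoted in this post; the function name is made up for illustration, and it assumes the load is spread evenly across all nodes.

```python
def iops_per_node(cluster_iops: int, nodes: int) -> float:
    """Average IOPS per node, assuming an even spread across the cluster."""
    return cluster_iops / nodes


NODES = 64  # cluster size behind the quoted claims

# Cluster-wide totals mentioned in this post.
claims = [
    ("VMware claim (6M)", 6_000_000),
    ("Intel benchmark (6.7M)", 6_700_000),
    ("Rounded up (7M)", 7_000_000),
]

for label, total in claims:
    print(f"{label}: {iops_per_node(total, NODES):,.0f} IOPS per node")
```

Even the generous 7M figure lands just under 110K IOPS per node, which is the number used for the comparison later in this post.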

Reference: VMware Virtual SAN Datasheet

VMW Claim Two: Highest Performance >100K IOPS per node

The graphic below (pulled directly from VMware’s website) shows their performance claims of >100K IOPS per node and >6 Million IOPS per cluster.

Reference: Introducing you to the 4th Generation Virtual SAN

Now what about Nutanix Distributed Storage Fabric (NDSF) & Acropolis Operating System (AOS) 4.6?

We’re now at the point where the hardware is becoming the bottleneck as we are saturating the performance of physical Intel S3700 enterprise-grade solid state drives (SSDs) on many of our hybrid nodes. As such we have moved onto performance testing of our NX-9460-G4 model which has 4 nodes running Haswell CPUs and 6 x Intel S3700 SSDs per node all in 2RU.

With AOS 4.6 running ESXi 6.0 on an NX-9460-G4 (4 x NX-9040-G4 nodes), Nutanix are seeing in excess of 150K IOPS per node, which is 600K IOPS per 2RU (Nutanix Block).

The below graph shows performance per node and how the solution scales in terms of performance up to a 4 node / 1 block solution which fits within 2RU.

So Nutanix AOS 4.6 provides approx. 36% higher performance than VSAN 6.2.

(>150K IOPS per NX-9040-G4 node compared to <=110K IOPS per All Flash VSAN 6.2 node)
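For anyone wanting to check the percentage, here is a rough sketch of the calculation using the two per-node figures quoted above. The helper name is hypothetical. Since 150K is a floor and 110K is a ceiling, the result is the smallest gap consistent with those two numbers.

```python
def percent_higher(a: float, b: float) -> float:
    """How much higher a is than b, as a percentage of b."""
    return (a - b) / b * 100


NUTANIX_IOPS_PER_NODE = 150_000  # ">150K" per NX-9040-G4 node
VSAN_IOPS_PER_NODE = 110_000     # "<=110K" per All Flash VSAN 6.2 node
NODES_PER_BLOCK = 4              # one NX-9460-G4 block in 2RU

gap = percent_higher(NUTANIX_IOPS_PER_NODE, VSAN_IOPS_PER_NODE)
print(f"Per-node gap: {gap:.1f}%")  # 36.4%
print(f"Per 2RU block: {NUTANIX_IOPS_PER_NODE * NODES_PER_BLOCK:,} IOPS")  # 600,000 IOPS
```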

It should be noted the above Nutanix performance numbers have already been improved upon in upcoming releases going through performance engineering and QA, so this is far from the best you will see.

Enough with the nonsense marketing numbers! Let’s get to the point of the post:

These 4k 100% random read IOPS (and similar) tests are totally unrealistic.

Even if the 4k IOPS tests were realistic, to quote my previous article:

Peak performance is rarely a significant factor for a storage solution.

More importantly, SO WHAT if Vendor A (in this case Nutanix) has higher peak performance than Vendor B (in this case VSAN)!

What matters is customer business outcomes, not benchmark(eting)!

Wait a minute, the vendor with the higher performance is telling you peak performance doesn’t matter instead of bragging about it and trying to make it sound important?

Yes, you are reading that correctly: no one should care who has the highest unrealistic benchmark!

I wrote Things to consider when choosing infrastructure a while back to highlight that choosing the “Best of Breed” for every workload may not be a good overall strategy, as it will require management of multiple silos, which leads to inefficiency and increased costs.

The key point is that if you can meet all the customer requirements (e.g.: performance) with a standard platform while working within constraints such as budget, power, cooling, rack space and time to value, you’re doing yourself (or your customer) a disservice by not considering a standard platform for your workloads. So if Vendor X has 10% faster performance (even for your specific workload) than Vendor Y but Vendor Y still meets your requirements, performance shouldn’t be a significant consideration when choosing a product.

Both VSAN and Nutanix are software defined storage and I expect both will continue to rapidly improve performance through tuning done completely in software. If we were talking about a product which is dependent on offloading to hardware, then sure, performance comparisons would be relevant for longer, but VSAN and Nutanix are both 100% software and can/do improve performance in software with every release.

In 3 months, VSAN might be slightly faster. Then 3 months later Nutanix will overtake them again. In reality, peak performance rarely if ever impacts real world customer deployments and with scale out solutions, it’s even less relevant as you can scale.

If a solution can’t scale, or does so in 2-node mirror type configurations, then considering peak performance is much more critical. I’d suggest that if you’re looking at this (legacy) style of product, you have bigger issues.

Not only does performance in the software defined storage world change rapidly, so does the performance of the underlying commodity hardware, such as CPUs and SSDs. This is why it’s important to consider products (like VSAN and Nutanix) that are not dependent on proprietary hardware, as hardware eventually becomes a constraint. This is why the world is moving towards software defined for storage, networking etc.

If more performance is required, the ability to add new nodes and the ability to form a heterogeneous cluster and distribute data evenly across the cluster (like NDSF does) is vastly more important than the peak IOPS difference between two products.
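To put the scale-out point in numbers, here is a small sketch of how a per-node difference translates into node count. It assumes performance scales linearly as nodes are added, which is a simplification. The 500K IOPS requirement and the product labels are hypothetical, and the per-node figures are just the marketing numbers discussed earlier.

```python
import math


def nodes_required(required_iops: int, iops_per_node: int) -> int:
    """Smallest node count that meets the requirement, assuming linear scale-out."""
    return math.ceil(required_iops / iops_per_node)


REQUIRED_IOPS = 500_000  # hypothetical workload requirement

# Per-node figures are the peak numbers quoted earlier in this post.
for product, per_node in [("Product A", 150_000), ("Product B", 110_000)]:
    count = nodes_required(REQUIRED_IOPS, per_node)
    print(f"{product}: {count} nodes")  # Product A: 4 nodes, Product B: 5 nodes
```

Under these assumptions both products meet the requirement, and the peak IOPS gap shows up as a single extra node rather than as a pass/fail difference.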

While you might think that this blog post is a direct attack on HCI vendors, the same principle holds true for any hardware or storage vendor out there. It is only a matter of time before customers stop getting trapped in benchmark(et)ing wars. They will instead identify their real requirements and readily embrace the overall value of dramatically simple on-premises infrastructure.

In my opinion, Nutanix is miles ahead of the competition in terms of value, flexibility, operational benefits, product maturity and market-leading customer service, all of which matter way more than peak performance (where Nutanix is the fastest anyway).

Summary:

- Focus on what matters and determine whether or not a solution delivers the required business outcomes. Hint: This is rarely just a matter of MOAR IOPS!

- Don’t waste your time in benchmark(et)ing wars or proof of concept bake-offs.

- Nutanix AOS 4.6 outperforms VSAN 6.2

- A VSA can outperform an in-kernel SDS product, so let’s put that in-kernel vs VSA nonsense to rest.

- Peak performance benchmarks still don’t matter even when the vendor I work for has the highest performance. (a.k.a. my opinion doesn’t change based on my employer’s current product capabilities)

- ALL storage vendors should stop with the peak IOPS nonsense marketing.

- Software-defined storage products like Nutanix and VSAN continue to rapidly improve performance, so comparisons are outdated soon after publication.

- Products dependent upon proprietary hardware are not the future.

- Put a high focus on the quality of vendor support.

Related Articles:

- Peak Performance vs Real World Performance

- Peak performance vs Real World – Exchange on Nutanix Acropolis Hypervisor (AHV)

- The Key to performance is Consistency

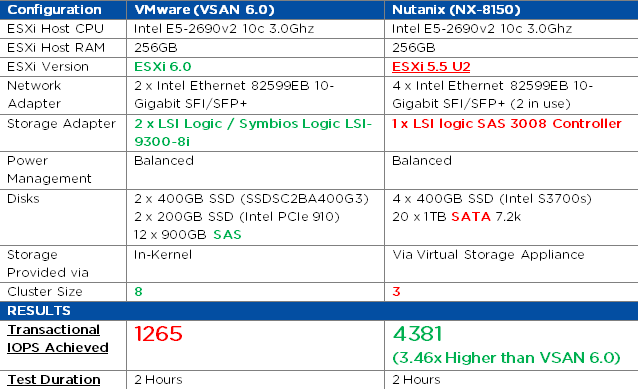

- MS Exchange Performance – Nutanix vs VSAN 6.0

- Scaling to 1 Million IOPS and beyond linearly!

- Things to consider when choosing infrastructure.