Following on from Part 1 of the Scalability series, where we discussed how Nutanix can scale storage capacity separately from compute, the next obvious topic is scaling CPU and memory resources at both the workload and cluster level.

Let’s first recap the problems with scaling compute with traditional shared storage.

Yuk! That looks like old school 3-tier stuff to me!

Non-HCI workloads on compute-only nodes would therefore:

- Be running in the same setup as traditional 3-tier infrastructure

- Have different performance than HCI based workloads

- Lose the advantage of having compute + storage close together

- Increase dependency on Network

- Impact network utilization of HCI node/s

- Impact benefits of HCI for the native HCI workloads and much more.

The industry has accepted HCI as the way of the future, and while adding compute-only nodes might sound nice at a high level, it’s just re-introducing the classic 3-tier complexity and problems of the past. When we review the actual requirements, it’s very rare to see a Nutanix node have insufficient resources when sized/configured correctly.

Customers are often surprised when they show me their workloads and I don’t seem surprised by the CPU/RAM or storage IO or capacity requirements. I can’t tell you how many times I’ve made statements like “Your application’s requirements are not that high, I’ve seen much worse!”.

Examples of scaling compute with Nutanix

Example 1: Scaling up a Virtual Machine’s compute resources:

SQL/Oracle DBA: Our application is growing/running slowly, we need more CPU/RAM!

Nutanix: You have several options:

a) Scale up the virtual machine’s vCPUs and vRAM to match the size of the NUMA node.

b) Scale up the virtual machine’s vCPUs to the number of pCores in the host minus the Nutanix CVM’s vCPUs, and size the vRAM the same way (host RAM minus the CVM’s RAM).

The first option is optimal, as it ensures maximum memory performance because the CPU will be accessing memory within the NUMA boundary. However, the second option is still viable, and for applications such as SQL the impact of insufficient memory can be higher than the penalty of crossing a NUMA boundary.
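As a rough illustration of the two sizing options, here is a minimal Python sketch. It assumes one NUMA node per socket with RAM spread evenly across sockets, and it ignores which NUMA node the CVM itself runs on. The `Host` class, the function names, and the 768 GB host RAM and 32 GB CVM RAM figures are hypothetical values chosen for the example, not Nutanix recommendations.

```python
from dataclasses import dataclass


@dataclass
class Host:
    """Hypothetical description of a node; all values are illustrative."""
    sockets: int
    cores_per_socket: int
    ram_gb: int
    cvm_vcpus: int
    cvm_ram_gb: int


def numa_sized_vm(host: Host) -> tuple[int, int]:
    """Option (a): keep the VM inside one NUMA node.

    Assumes one NUMA node per socket and RAM split evenly across sockets.
    Returns (vCPUs, vRAM in GB).
    """
    return host.cores_per_socket, host.ram_gb // host.sockets


def host_sized_vm(host: Host) -> tuple[int, int]:
    """Option (b): use every pCore and all RAM left after the CVM.

    Returns (vCPUs, vRAM in GB).
    """
    total_cores = host.sockets * host.cores_per_socket
    return total_cores - host.cvm_vcpus, host.ram_gb - host.cvm_ram_gb


# Example values only: dual 28-core sockets, 768 GB RAM, 8 vCPU / 32 GB CVM.
example_host = Host(sockets=2, cores_per_socket=28, ram_gb=768,
                    cvm_vcpus=8, cvm_ram_gb=32)

print("Option (a), NUMA-sized VM:", numa_sized_vm(example_host))
print("Option (b), host-sized VM:", host_sized_vm(example_host))
```

Option (a) gives the smaller VM with purely local memory access, while option (b) trades some NUMA locality for more cores and RAM.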

BUT MY WORKLOAD IS UNIQUE, IT NEEDS A PHYSICAL SERVER!!

Despite hearing these types of statements from prospective and existing customers, very few workloads actually need more CPU/RAM than a modern Nutanix (or OEM/Software only) node can provide, even if you remove resources for the Controller VM (CVM). I find that it’s usually only a perceived requirement for physical servers and in reality, a reasonably sized VM on a standard node will deliver the desired business outcome/s comfortably.

Currently Nutanix NX nodes support Intel Platinum 8180 processors, which have 28 physical cores @ 2.5 GHz per socket, for a total of 56 physical cores (112 threads) in a dual-socket node.

If you had, say, an existing physical server using fairly modern dual Intel Broadwell E5-2699 v4 processors with 22 physical cores each, you have a total SPECint_rate of 1760 or 40 per core.

Compare that to the Intel Platinum 8180 processor and you have a total SPECint_rate of 2720 or 48.5 per core.

This is an increase per core of 21.25%.

So if you move that workload from the physical server using Intel Broadwell E5-2699 v4 CPUs (44 cores) to Nutanix with ZERO CPU overcommitment (vCPU:pCore ratio 1:1) using the Intel Platinum 8180 processor, and we reserve 8 pCores for the CVM, we still have 48 pCores for the SQL VM.

That’s a SPECint_rate of 2328, which is higher than the physical server using all cores.

That’s over 32% more CPU performance for the Virtual Machine compared to the dedicated physical server.
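The arithmetic above can be reproduced with a few lines of Python. This is only a back-of-the-envelope sketch: it assumes SPECint_rate scales linearly with core count, which is a simplification, and the variable names are mine. Because it uses the unrounded per-core figure (2720 / 56 is about 48.57 rather than 48.5), the results differ slightly from the rounded numbers in the text: roughly 21.4% per core and a VM score of about 2331, which is still roughly 32% above the physical server.

```python
# Figures quoted in the text above.
old_total, old_cores = 1760, 44   # dual Intel Broadwell E5-2699 v4
new_total, new_cores = 2720, 56   # dual Intel Platinum 8180
cvm_cores = 8                     # pCores reserved for the CVM

# Per-core score, assuming the total scales linearly with cores.
old_per_core = old_total / old_cores
new_per_core = new_total / new_cores
per_core_gain = new_per_core / old_per_core - 1

# Cores left for the SQL VM at a 1:1 vCPU:pCore ratio.
vm_cores = new_cores - cvm_cores
vm_rate = vm_cores * new_per_core
vm_gain = vm_rate / old_total - 1

print(f"Old per-core score: {old_per_core:.1f}")
print(f"New per-core score: {new_per_core:.2f}")
print(f"Per-core increase:  {per_core_gain:.1%}")
print(f"VM cores available: {vm_cores}")
print(f"VM estimated score: {vm_rate:.0f}")
print(f"VM vs. physical:    {vm_gain:+.1%}")
```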

The reality is that the Nutanix CVM and Acropolis Distributed Storage Fabric (ADSF) provide high performance, low latency storage, which also drives further CPU efficiency by eliminating CPU WAIT (CPU cycles wasted waiting for I/O to complete).

As you can see from this simple example, a Virtual Machine on Nutanix can easily replace even a modern physical server and even provide better performance with only one generation newer CPU. Think about how your 3-5 year old physical servers will feel when they jump multiple generations of CPU and get scale out flash based storage.

Example 2: A VM (genuinely) needs more CPU/RAM than Nutanix nodes have.

SQL/Oracle DBA: Our application/s need more CPU/RAM than our biggest node/s can provide.

Nutanix: You have several options:

a) Purchase one or more larger nodes (e.g.: NX-8035-G6 w/ Intel Platinum or Gold Processors), add them to the existing cluster and live migrate your VM/s to that/those nodes. Use affinity rules to keep critical VMs on the highest performance nodes.

Nutanix supports mixing different hardware types/generations in the same cluster and this can be a preferred option over creating a dedicated cluster for several reasons.

- Larger clusters provide more targets for replication traffic (i.e.: RF2 or RF3) meaning lower average write latency

- Larger clusters provide higher resiliency as they can potentially tolerate more failures and rebuild faster following the failure of a drive or of one or more nodes.

- Larger clusters help ensure the impact of a failure is lower as a lower percentage of cluster resources is lost
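To put a number on the last point, a minimal sketch: assuming all nodes are the same size (a simplification, since mixed clusters are supported), the share of cluster resources lost when a node fails is simply the failed nodes divided by the total. The function name and the cluster sizes shown are illustrative.

```python
def resources_lost_pct(nodes: int, failed: int = 1) -> float:
    """Percentage of cluster resources lost when `failed` nodes go down.

    Assumes every node contributes equally to the cluster.
    """
    if nodes <= 0 or not 0 <= failed <= nodes:
        raise ValueError("need nodes > 0 and 0 <= failed <= nodes")
    return 100.0 * failed / nodes


for size in (4, 8, 16, 32):
    print(f"{size:>2} nodes: one node failure removes "
          f"{resources_lost_pct(size):.2f}% of cluster resources")
```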

b) Purchase one or more larger nodes (e.g.: NX-8035-G6 w/ Intel Platinum or Gold Processors), create a new cluster and migrate your VM/s to that cluster.

A dedicated cluster may sound attractive, but in most cases I recommend mixed workload clusters as they ultimately provide higher performance, resiliency and flexibility.

c) Scale out your workloads

Applications like MS Exchange, MS SQL and Oracle RAC can (and arguably should) be scaled out rather than scaled up as doing so provides increased performance, resiliency and reduces overall infrastructure costs (e.g.: More cheaper/smaller processors can be used as opposed to premium processors like Intel Platinum series).

One large VM hosting dozens of databases is rarely a good idea, so scale out and run more VMs, distributed across your Nutanix cluster and spread the workload across all the VMs.

For 99% of workloads, I do not see the real world value of compute only nodes. But there are always exceptions to every rule.

Potential Exceptions:

Example 3: Re-using existing hardware

SQL DBA: I love my Nutanix gear (duh!) but I have some physical servers which won’t be end of life for 12 months, can I continue using them with Nutanix?

Nutanix: We have several options:

a) If the hardware is on our Software-only hardware compatibility list (HCL), it’s possible you can purchase SW-only licenses and deploy Nutanix on your existing hardware.

b) Use Nutanix Acropolis Block Services (ABS) to provide highly available scale out storage to your physical server via iSCSI.

ABS was released in 2015 and supports SCSI-3 persistent reservations for shared storage-based Windows clusters, which are commonly used with Microsoft SQL Server and clustered file servers.

ABS supports several use cases, including:

- iSCSI for Microsoft Exchange Server.

- Shared storage for Linux-based clusters

- Windows Server Failover Clustering (WSFC).

- SCSI-3 persistent reservations for shared storage-based Windows clusters

- Shared storage for Oracle RAC environments.

- Bare-metal environments.

Therefore ABS allows you to re-use your existing hardware to maximise your return on investment (ROI) while getting the benefits of ADSF. Once the hardware is end of life, the storage already on the Nutanix cluster can be quickly presented to a VM so the workload will benefit from the full Nutanix HCI experience.

Future Capabilities:

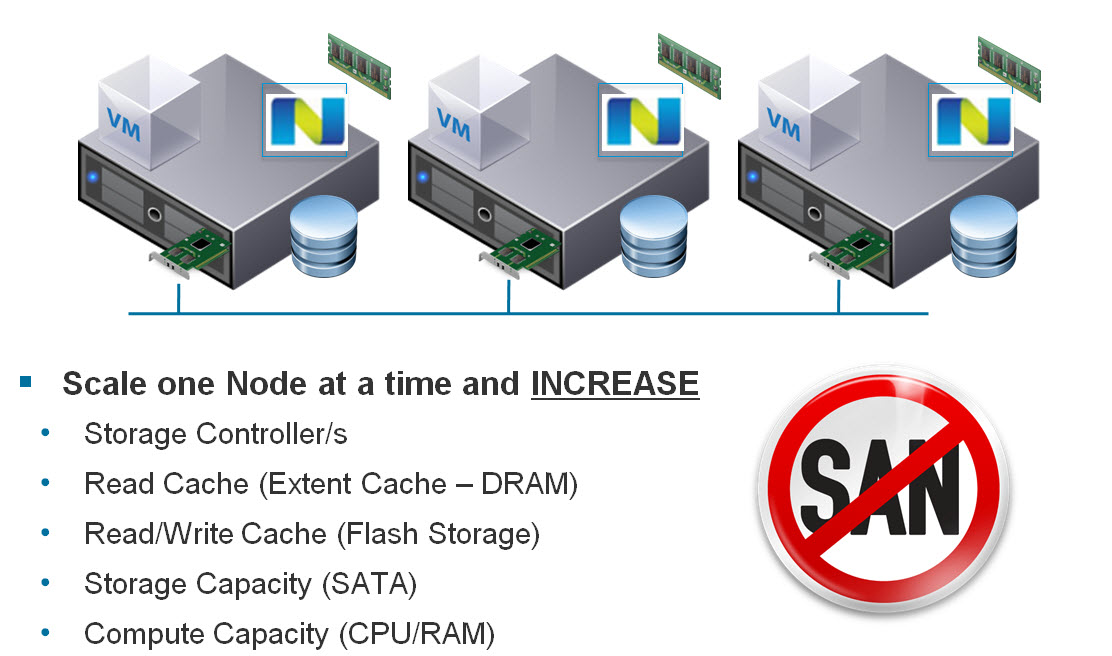

In late 2017, Nutanix announced Nutanix Acropolis Compute Cloud (AC2), which will provide the ability to have true compute-only nodes in a Nutanix cluster, as shown in the diagram above.

I reluctantly mention this upcoming capability because I do not want to see customers go back to a 3-tier model or think that HCI isn’t the way forward, because it is. That’s not what compute-only is about.

This capability is specifically designed to work around the niche circumstances where a software vendor, such as Oracle, is extorting customers from a licensing perspective and it’s desirable to maximise the CPU cores the application can use.

Let me have a quick rant and put an end to the nonsense before it gets out of hand:

IT IS NOT FOR GENERAL VM USE!

NO, IT’S NOT FOR PERFORMANCE REASONS.

NO NUTANIX IS NOT MOVING BACK TO A 3-TIER COMPUTE+STORAGE MODEL.

HCI WITH NUTANIX IS STILL THE WAY FORWARD

Summary:

Nutanix provides excellent scalability at the CPU/RAM level for both virtual and physical workloads. In rare circumstances where physical servers are a real (or likely just a perceived) requirement, ABS can be used while Nutanix will soon also provide Compute-only for AHV customers to ensure licensing value is maximised for those rare cases.
