Problem Statement

What is the most suitable DRS automation level and migration threshold for a vSphere cluster running on Nutanix?

Requirements

1. Ensure optimal performance for Business Critical Applications

2. Minimize complexity where possible

Assumptions

1. Workload types and size are unpredictable and workloads may vary greatly and without notice

2. The solution needs to be as automated as possible without introducing significant risk

3. vSphere 5.0 or later

Constraints

1. 2 x 10Gb NICs per ESXi host (Nutanix node)

Motivation

1. Prevent unnecessary vMotion migrations which will impact host & cluster performance

2. Ensure the cluster's host load standard deviation remains low

3. Reduce administrative overhead of reviewing and approving DRS recommendations

4. Ensure optimal storage performance

Architectural Decision

Use DRS in Fully Automated mode with setting “2” – Apply priority 1 and 2 recommendations

Create a DRS “Should run on hosts in group” rule for each Business Critical Application (BCA) and configure each BCA to run on a single specified host (ensuring BCAs are separated or grouped according to workload)

DRS Automation will be Disabled for all Controller VMs (CVMs)
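
The three decisions above can be pictured as a small model of the desired state. The following is a minimal sketch only, not vSphere API code: the class names, VM names and host names are all hypothetical and exist purely for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, List, Optional


class DrsBehavior(Enum):
    FULLY_AUTOMATED = "fully automated"
    PARTIALLY_AUTOMATED = "partially automated"
    MANUAL = "manual"
    DISABLED = "disabled"  # per-VM override: DRS never migrates this VM


@dataclass
class Vm:
    name: str
    is_cvm: bool = False                  # Nutanix Controller VM
    preferred_host: Optional[str] = None  # target of a "should run on" rule (BCAs)


def effective_behavior(
    vm: Vm, cluster_default: DrsBehavior = DrsBehavior.FULLY_AUTOMATED
) -> DrsBehavior:
    """CVMs are excluded from DRS; every other VM inherits the cluster default."""
    return DrsBehavior.DISABLED if vm.is_cvm else cluster_default


def should_rules(vms: List[Vm]) -> Dict[str, str]:
    """Map each VM that needs a "should run on" rule to its preferred host."""
    return {vm.name: vm.preferred_host for vm in vms if vm.preferred_host}


# Hypothetical inventory: one CVM, one BCA pinned (softly) to a host, one general VM.
inventory = [
    Vm("cvm-node-a", is_cvm=True),
    Vm("sql-prod-01", preferred_host="esxi-01"),
    Vm("web-01"),
]

for vm in inventory:
    print(vm.name, "->", effective_behavior(vm).value)
print(should_rules(inventory))
```

Because the BCA placement is a "should" rule rather than a "must" rule, it expresses a preference only, so HA restarts and maintenance mode evacuations are not blocked by it.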

Justification

1. A conservative migration threshold prevents excessive vMotion migrations that do not provide a significant compute benefit to cluster balance, as each vMotion itself consumes cluster and network resources

2. Ensure the Nutanix Distributed File System, specifically the “Curator” component, does not need to frequently relocate data between the direct attached storage of Nutanix nodes (ESXi hosts) to give virtual machines local access to their data. Doing so would put additional load on the Controller VM (and Curator service), local and remote storage, and the network.

3. Ensure the cluster remains in a reasonably load balanced state without resources being wasted on load balancing the compute layer for only a minimal improvement, which may impact the storage and network layers.

4. Applying priority 1 and 2 recommendations means that recommendations which must be followed to satisfy cluster constraints, such as affinity rules and host maintenance, will be applied (priority 1), as well as recommendations with four or more stars (priority 2) that promise a significant improvement in the cluster’s load balance. Where a significant improvement to the cluster’s load balance will be achieved, movement of data at the storage layer (via the CVM / network) can be justified

5. DRS is a low risk, proven technology which has been used in large production environments for many years

6. Setting DRS to manual would be a significant administrative (BAU) overhead and would introduce additional risks, such as human error and situations where contention goes unnoticed, which may impact the performance of one or more VMs

7. Setting a more aggressive DRS migration threshold may put additional load on the cluster which is unlikely to result in significantly better cluster balance (or VM performance) and could result in significant additional workload for the ESXi hosts (compute layer), the Nutanix Controller VM (CVM), the network and the underlying storage.

8. Using DRS “Should run on hosts in group” rules for Business Critical Applications (BCAs) ensures consistent performance for these workloads (by keeping each VM on the ESXi host/Nutanix node where its data is local) without introducing significant complexity or limiting vSphere functionality
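
The relationship between the migration threshold and the recommendations that DRS applies automatically can be sketched as below. This is an illustrative model under stated assumptions, not DRS's implementation: the `Recommendation` type and the sample VM names are hypothetical, and the only rule encoded is that threshold N applies recommendations of priority 1 through N.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Recommendation:
    vm: str
    priority: int  # 1 = mandatory (rules, maintenance mode) ... 5 = marginal benefit


def applied_priorities(threshold: int) -> List[int]:
    """Migration threshold N applies recommendations of priority 1..N."""
    if not 1 <= threshold <= 5:
        raise ValueError("migration threshold must be between 1 and 5")
    return list(range(1, threshold + 1))


def auto_applied(recs: List[Recommendation], threshold: int) -> List[Recommendation]:
    """Return the recommendations DRS would apply without operator approval."""
    allowed = set(applied_priorities(threshold))
    return [r for r in recs if r.priority in allowed]


# Hypothetical set of pending recommendations.
recs = [
    Recommendation("vm-a", 1),
    Recommendation("vm-b", 2),
    Recommendation("vm-c", 3),
    Recommendation("vm-d", 5),
]

# Each step up in threshold can only add vMotions, never remove them.
for threshold in range(1, 6):
    moved = [r.vm for r in auto_applied(recs, threshold)]
    print(f"threshold {threshold}: {len(moved)} vMotion(s) {moved}")
```

Every additional vMotion in a Nutanix environment also implies data movement to restore locality, which is why the lower-priority recommendations are weighed against their storage and network cost.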

Implications

1. In some circumstances the DRS cluster may have a low level of imbalance

2. DRS will not move workloads via vMotion where only a moderate improvement to the cluster will be achieved

3. At times, including after performing updates (via VUM) of ESXi hosts (Nutanix nodes), the cluster may appear to be unevenly balanced as DRS may calculate minimal benefit from migrations. Setting DRS to “Use Fully automated and Migration threshold 3” for a short period of time following maintenance should result in a more evenly balanced DRS cluster with minimal (short term) increased workload for the Nutanix Controller VM (CVM), the network and the underlying storage.

4. DRS rules will need to be created for Business Critical Applications
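
The imbalance described in the implications above can be illustrated with a simple standard deviation over per-host load. This is a deliberately simplified sketch: DRS derives its own host load figures from CPU and memory demand, whereas the values below are hypothetical normalised loads chosen only to show the effect of a host returning from maintenance.

```python
from statistics import pstdev
from typing import Sequence


def host_load_stddev(host_loads: Sequence[float]) -> float:
    """Population standard deviation of normalised host loads (0.0 to 1.0)."""
    return pstdev(host_loads)


# Hypothetical four-node cluster: the third host has just exited maintenance
# mode and is nearly empty, so the spread between hosts is large.
after_maintenance = [0.70, 0.65, 0.10, 0.68]

# The same total load after a short period at a more aggressive threshold.
rebalanced = [0.55, 0.52, 0.50, 0.56]

print("after maintenance:", round(host_load_stddev(after_maintenance), 3))
print("rebalanced:       ", round(host_load_stddev(rebalanced), 3))
```

A lower value means the hosts are more evenly loaded. The conservative threshold accepts a somewhat higher value in exchange for fewer migrations.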

Alternatives

1. Use Fully automated and Migration threshold 1 – Apply priority 1 recommendations

2. Use Fully automated and Migration threshold 3 – Apply priority 1, 2 and 3 recommendations

3. Use Fully automated and Migration threshold 4 – Apply priority 1, 2, 3 and 4 recommendations

4. Use Fully automated and Migration threshold 5 – Apply priority 1, 2, 3, 4 and 5 recommendations

5. Set DRS to manual and have a VMware administrator assess and apply recommendations

6. Set DRS to “Partially automated”

Related Articles

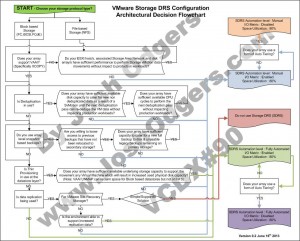

1. Storage DRS and Nutanix – To use or not to use, That is the question