In the first part of this series, I introduced the Nutanix X-Ray benchmarking tool, which is designed very differently from traditional benchmarking tools: the performance of the application is the control and the platform is the variable, not the other way around.

In the second part, I showed how Nutanix AHV & AOS could maintain performance while using snapshots to achieve the kind of recovery point objective (RPO) expected in production environments, especially for business-critical workloads, whereas a leading hypervisor and SDS platform could not.

In this part, I will cover the Extended Node Failure scenario in X-Ray and again compare Nutanix AOS/AHV with a leading hypervisor and SDS platform in another real-world scenario.

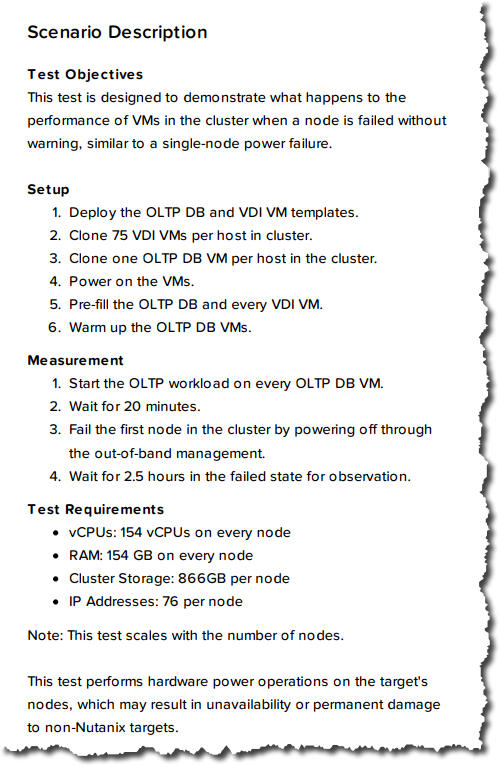

Let’s start by reviewing the description of the X-Ray Extended Node Failure scenario.

I really like that X-Ray has a scenario which simulates a node failure, as this is bound to happen regardless of the platform you choose. With hyperconverged platforms, the impact of a node failure is arguably higher than with traditional 3-tier, as the nodes contain some data which needs to be recovered.

As such, before choosing an HCI platform, it is critical to understand how it behaves in a failure scenario, which is exactly what this scenario demonstrates.

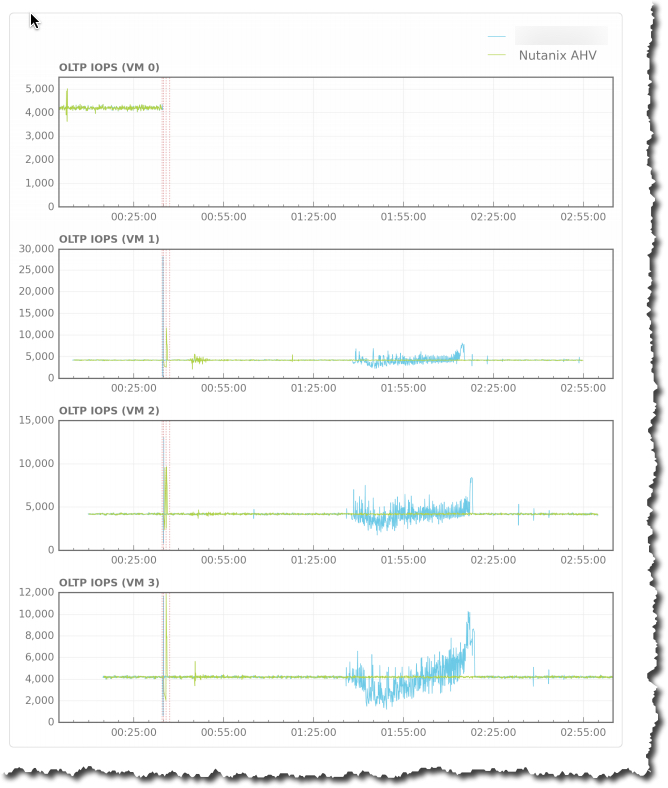

Here we can see the impact on the performance of the surviving VMs after power to a node is disconnected via the out-of-band management interface.

The Nutanix AOS/AHV platform continues to run at a very steady rate, virtually without impact to the VMs. On the other hand, we see that after 1 hour the other platform is heavily impacted, with significant performance degradation.

This clearly shows the Acropolis Distributed Storage Fabric (ADSF) to be a superior platform from a resiliency perspective, which should be a primary consideration when choosing a platform for any production environment.

Back in 2014, I highlighted the Problems with RAID and Object Based Storage for data protection, and in a follow-up post I discussed how Nutanix Acropolis Distributed Storage Fabric (ADSF) compares with traditional SAN/NAS RAID and hyper-converged solutions using Object storage for data protection.

The above results clearly demonstrate that the problems I discussed back in 2014 still apply to even the most recent versions of a leading hypervisor and SDS platform. This is because the problem lies in the underlying architecture, and bolting on new features at best masks the constraints of the original architectural decision, which has proven to be significantly flawed.

This scenario clearly demonstrates how critical it is to look beyond peak performance numbers and to conduct a thorough evaluation of a platform prior to purchase, as well as comprehensive operational verification prior to moving any platform into production.
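If you run this kind of failure test in your own evaluation, one simple way to put a number on the impact is to compare the average throughput of the surviving VMs before and after the failure. The following is a minimal sketch in Python; the function name `degradation_pct`, the sample list, and the idea of a single failure index are my own illustrative assumptions and are not part of X-Ray or its output format.

```python
from statistics import mean


def degradation_pct(samples, failure_index):
    """Percent drop in mean throughput after a failure, relative to before it.

    samples       -- throughput readings (e.g. IOPS) in time order (hypothetical input)
    failure_index -- index of the first reading taken after the node failure
    """
    before = samples[:failure_index]
    after = samples[failure_index:]
    if not before or not after:
        raise ValueError("need at least one sample on each side of the failure")

    baseline = mean(before)
    if baseline <= 0:
        raise ValueError("baseline throughput must be positive")

    # Positive result = performance dropped; near zero = steady through the failure.
    return (baseline - mean(after)) / baseline * 100.0


if __name__ == "__main__":
    # Made-up readings for illustration only, not measurements from any platform.
    readings = [4000, 4000, 4000, 3000, 3000, 3000]
    print(f"Degradation after failure: {degradation_pct(readings, 3):.1f}%")
```

A platform that holds steady through the failure should produce a value close to zero, while a large positive value indicates the kind of degradation worth investigating before going to production.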

Related Articles:

Nutanix X-Ray Benchmarking tool Part 1 – Introduction

Nutanix X-Ray Benchmarking tool Part 2 – Snapshot Impact Scenario