Problem:

Remote Office / Branch Office sites (commonly referred to as “ROBO”) and dark sites (i.e. offices without local support staff and/or network connectivity to a central datacenter) are notoriously difficult to design, deploy and manage.

Why have infrastructure at ROBO?

Customers have infrastructure at ROBO and/or dark sites because these sites require services which cannot be provided centrally due to constraints such as WAN bandwidth, latency or availability or, more frequently, security.

Challenges:

Infrastructure at ROBO and/or dark sites needs to be functional, highly available and performant without complexity. The problem is that the functional requirements of ROBO/dark sites are typically not dissimilar to those of the datacenter/s, so the complexity of these sites can equal that of the primary datacenter, if not exceed it, given the reduced budgets for ROBOs.

This means in many cases the same management stack needs to be designed on a smaller scale, deployed and somehow managed at these remote/secure sites with minimal to no I.T presence onsite.

Alternatively, management may be run centrally, but this has its own challenges, especially when WAN links are high latency, low bandwidth, unreliable or offline.

Typical ROBO deployment requirements:

Typical requirements are in many cases not dissimilar to those of the SMB or enterprise and include things like High Availability (HA) for VMs, which means a minimum of 2 nodes and some shared storage. Customers also want to ensure ROBO sites can be centrally managed without deployment of complex tooling at each site.

ROBO and Dark Sites are also typically deployed because in the event of WAN connectivity loss, it is critical for the site to continue to function. As a result, it is also critical for the infrastructure to gracefully handle failures.

So let’s summarise typical ROBO requirements:

- VM High Availability

- Shared Storage

- Be fully functional when WAN/MAN is down

- Low/no touch from I.T

- Backup/Recovery

- Disaster Recovery

Solution:

Nutanix Xtreme Computing Platform (XCP) including PRISM and Acropolis Hypervisor (AHV).

Now let’s dive into why XCP + PRISM + AHV is a great solution for ROBO.

A) Native Cross Hypervisor & Cloud Backup/Recovery & DR

Backup/Recovery and DR are not easy things to achieve or manage for ROBO deployments. Luckily these capabilities are built in to Nutanix XCP. This includes the ability to take point-in-time, application-consistent snapshots and replicate those to local/remote XCP clusters and Cloud Providers (AWS/Azure). These snapshots can be considered backups once replicated to a 2nd location (ideally offsite), and can also be kept locally on primary storage for fast recovery.

ROBO VMs replicated to remote/central XCP deployments can be restored onto either ESXi or Hyper-V via the App Mobility Fabric (AMF) so running AHV at the ROBO has no impact on the ability to recover centrally if required.

This is just another way Nutanix is ensuring customer choice, and it proves the hypervisor is well and truly a commodity.

In addition XCP supports integration with the market leader in data protection, Commvault.

B) Built in Highly Available, Distributed Management and Monitoring

When running AHV, all XCP, PRISM and AHV management, monitoring and even the HTML 5 GUI are built in. The management stack requires no design, sizing, installation, scaling or 3rd party backend database products such as SQL/Oracle.

For those of you familiar with the VMware stack, XCP + AHV provides capabilities provided by vCenter, vCenter Heartbeat, vRealize Operations Manager, Web Client, vSphere Data Protection, vSphere Replication. And it does this in a highly available and distributed manner.

This means, in the event of a node failure, the management layer does not go down. If the Acropolis Master node goes down, the Master roles are simply taken over by an Acropolis Slave within the cluster.
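The failover behaviour described above can be illustrated with a toy model. This is only a sketch for intuition and not how Acropolis is implemented: the `Cluster` class, its methods and the "first healthy node takes over" rule are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class Cluster:
    """Hypothetical cluster model: one master, the remaining nodes are slaves."""
    nodes: List[str]
    master: str = ""
    failed: Set[str] = field(default_factory=set)

    def __post_init__(self) -> None:
        # Assumption: the first node starts out holding the master role.
        if not self.master:
            self.master = self.nodes[0]

    def healthy_nodes(self) -> List[str]:
        """Return the nodes that have not failed, in their original order."""
        return [n for n in self.nodes if n not in self.failed]

    def fail(self, node: str) -> None:
        """Mark a node as failed and hand over the master role if it held it."""
        self.failed.add(node)
        if node != self.master:
            return  # A slave failed, so the master role is unaffected.
        survivors = self.healthy_nodes()
        if not survivors:
            raise RuntimeError("no healthy node left to take the master role")
        # Assumption: the first surviving slave takes over the master role.
        self.master = survivors[0]
```

In this model, failing the current master of a three node cluster leaves the management role running on one of the two surviving nodes, which is the property that matters for an unattended site.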

As a result, the ROBO deployment management layer is self healing, which dramatically reduces complexity and all but removes the requirement for onsite attendance by I.T.

C) Scalability and Flexibility

XCP with AHV ensures that even when ROBO deployments need to scale to meet compute or storage requirements, the platform does not need to be re-architected, re-engineered or optimised.

Adding a node is as simple as plugging it in and turning it on. The cluster can then be expanded non-disruptively via PRISM (locally or remotely) in just a few clicks.

When the equipment becomes end of life, XCP also allows nodes to be non-disruptively removed from clusters and new nodes added, which means after the initial deployment, ongoing hardware replacements can be done without major redesign/reconfiguration of the environment.

In fact, deployment of new nodes can be done by people onsite with minimal I.T knowledge and experience.

D) Built-in One Click Maintenance, Upgrades for the entire stack.

XCP supports one-click, non-disruptive upgrades of:

- Acropolis Base Software (NDSF layer),

- Hypervisor (agnostic)

- Firmware

- BIOS

This means there is no need for onsite I.T staff to perform these upgrades, and XCP eliminates potential human error by fully automating the process. All upgrades are performed one node at a time and are only started if the cluster is in a resilient state, to ensure maximum uptime. Once a node is upgraded, the upgrade is validated as successful (similar to a power-on self test, or POST) before the next node proceeds. In the event an upgrade fails, the cluster will remain online as I have described in this post.
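The upgrade flow described above (one node at a time, only when the cluster is resilient, validate before moving on) can be sketched as a simple loop. This is an illustration of the control flow only, not Nutanix code: the function name `rolling_upgrade` and the three callbacks are hypothetical placeholders for whatever checks and actions the platform performs internally.

```python
from typing import Callable, Iterable, List


def rolling_upgrade(
    nodes: Iterable[str],
    is_resilient: Callable[[], bool],
    upgrade: Callable[[str], None],
    validate: Callable[[str], bool],
) -> List[str]:
    """Upgrade nodes one at a time and return the nodes upgraded successfully.

    All three callbacks are hypothetical stand-ins:
      is_resilient() -> True if the cluster can tolerate a node being offline
      upgrade(node)  -> performs the upgrade on a single node
      validate(node) -> True if the node came back healthy after the upgrade
    """
    upgraded: List[str] = []
    for node in nodes:
        # Never take a node down unless the cluster can absorb its absence.
        if not is_resilient():
            break
        upgrade(node)
        # Stop at the first failed validation so the remaining nodes stay
        # on the known-good version and the cluster remains online.
        if not validate(node):
            break
        upgraded.append(node)
    return upgraded
```

The design point is that a failure only ever affects the single node being worked on, so the worst case is a cluster with one node out of service and the rest untouched.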

These upgrades can also be done centrally via PRISM Central.

E) Full Self Healing Capabilities

As I have already touched on, XCP + AHV is a fully self healing platform. From the storage layer (NDSF) to the virtualization layer (AHV) through to management (PRISM), the platform can fully self heal without any intervention from I.T admins.

With Nutanix XCP you do not need expensive hardware support contracts or to worry about potential subsequent failures, because the system self heals and does not depend on hardware replacement as I have described in hardware support contracts & why 24×7 4 hour onsite should no longer be required.

Anyone who has ever managed a multi-site environment knows how much effort hardware replacement is, as well as the fact that replacements must be done in a timely manner which can delay other critical work. This is why Nutanix XCP is designed to be distributed and self healing as we want to reduce the workload for sysadmins.

F) Ease of Deployment

All of the above features and functionality can be quickly and easily deployed, going from out of the box to fully operational and ready to run VMs in just minutes.

The Management/Monitoring solutions do not require detailed design (sizing/configuration) as they are all built in and they scale as nodes are added.

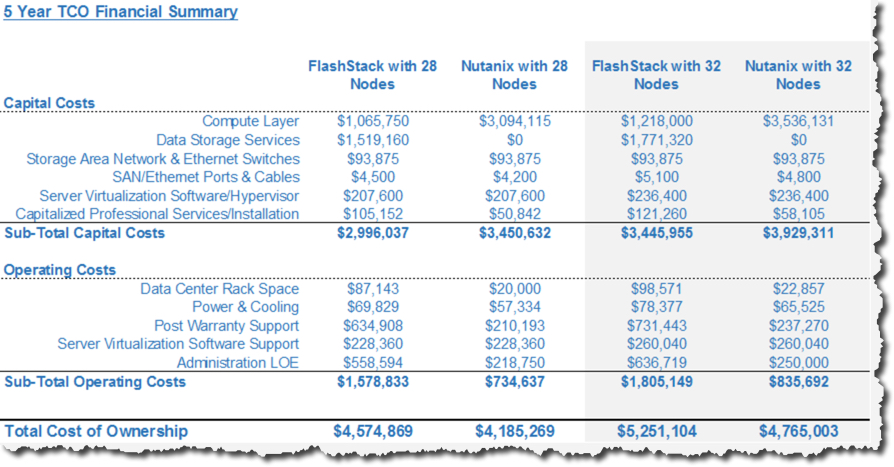

G) Reduced Total Cost of Ownership (TCO)

When it comes down to it, ROBO deployments can be critical to the success of a company and trying to do things “cheaper” rarely ends up actually being cheaper. Nutanix XCP may not be the cheapest option upfront (CAPEX), but we will have the lowest TCO, which is after all what matters.

If you’re a sysadmin and, after reading the above, you don’t think you can get any more efficient than what you’re doing today, it’s because you already run XCP + AHV 🙂

In all seriousness, sysadmins should be innovating and providing value back to the business. If they are instead spending any significant time “keeping the lights on” for ROBO deployments then their valuable time is not being well utilised.

Summary:

Nutanix XCP + AHV provides all the capabilities required for typical ROBO deployments while reducing the initial implementation and ongoing operational cost/complexity.

With Acropolis Operating System 4.6 and the cross hypervisor backup/recovery/DR capabilities thanks to the App Mobility Fabric (AMF), there is no need to be concerned about the underlying hypervisor as it has become a commodity.

AHV performance and availability are on par with, if not better than, other hypervisors on the market, as is clear from several of the points discussed above.

Related Articles:

- Why Nutanix Acropolis hypervisor (AHV) is the next generation hypervisor

- Hardware support contracts & why 24×7 4 hour onsite should no longer be required.