A common question is “How do I upload an ISO or virtual machine image to the Acropolis Hypervisor?” In NOS 4.5, this task just got radically simpler.

The screenshot below shows the “Home” screen in the PRISM UI. In the top left we can see that we are running the Acropolis Hypervisor (AHV), version 20150616.

By clicking the gear wheel at the top right, we can then access the “Image Configuration” menu.

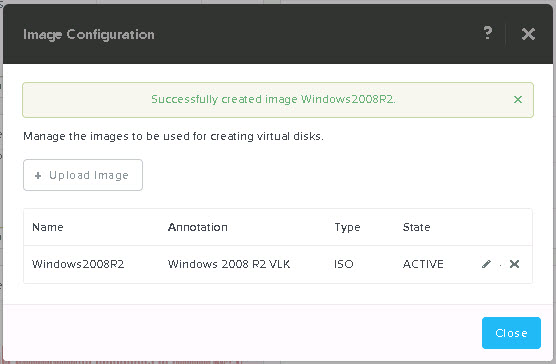

The “Image Configuration” menu is a quick and easy way to upload ISOs and virtual machine images to Acropolis.

As we can see below, it's a very simple process. Give the image a name and an annotation, select the image type from the drop-down list (ISO or Disk; Disk means RAW format, .img), and then select the image source: either a URL or a file uploaded from your machine.

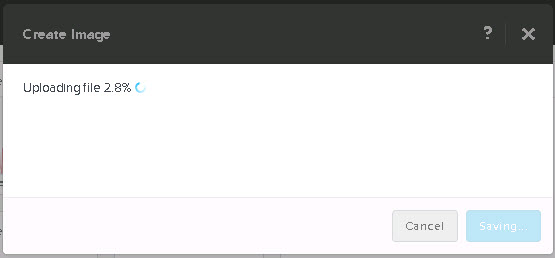
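For readers who like to see the inputs written down, here is a minimal Python sketch of the fields the dialog asks for. It is only an illustration of the form, not a Nutanix API: the class name `ImageSpec`, the field names, and the `"ISO"` / `"DISK"` labels are all assumptions made up for this example.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical labels mirroring the two choices in the drop-down list.
IMAGE_TYPES = {"ISO", "DISK"}


@dataclass
class ImageSpec:
    """Illustrative model of the Image Configuration form (not a Nutanix API)."""

    name: str
    image_type: str
    annotation: str = ""
    source_url: Optional[str] = None
    local_file: Optional[str] = None

    def validate(self) -> None:
        if not self.name.strip():
            raise ValueError("image name is required")
        if self.image_type not in IMAGE_TYPES:
            raise ValueError(f"image type must be one of {sorted(IMAGE_TYPES)}")
        # The form takes one source: a URL or a file uploaded from your machine.
        if (self.source_url is None) == (self.local_file is None):
            raise ValueError("provide exactly one of source_url or local_file")

    def to_dict(self) -> dict:
        """Return the validated form values as a plain dictionary."""
        self.validate()
        if self.source_url is not None:
            source = {"kind": "url", "value": self.source_url}
        else:
            source = {"kind": "file", "value": self.local_file}
        return {
            "name": self.name,
            "annotation": self.annotation,
            "image_type": self.image_type,
            "source": source,
        }
```

Calling `ImageSpec(name="my-iso", image_type="ISO", source_url="http://example.com/my.iso").to_dict()` returns the four values you would otherwise type into the dialog, while a spec with no source (or with both) is rejected before anything is uploaded.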

Once you have selected your ISO or Disk, hit Save. The image is then uploaded, and PRISM shows the status of the upload while it runs.

Once it's completed, PRISM shows the following summary:

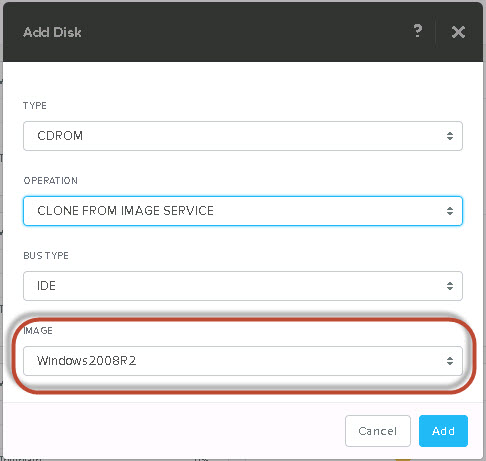

Now when you create a new VM, you will be able to select “Clone from Image Service” and pick the ISO image from a drop-down list.

Simple as that! Now you can boot your VM and start using the ISO. The same process can also be used to upload VM disk images.