Recently there has been some back and forth on social media (Twitter & Blogs) around the following article published on the Exchange Team Blog:

Troubleshooting High CPU utilization issues in Exchange 2013

The article discusses several topics including Common Configuration Issues, Over-sizing and several performance metrics which can help Exchange administrators identify and hopefully resolve performance problems.

As a result of some of the recent updated recommendations, the Exchange 2013 Server Role Requirements calculator has been updated to reflect the newer recommendations.

The calculator can be found here: Exchange 2013 Server Role Requirements Calculator.

On key recommendation from Microsoft is the maximum Exchange Mailbox (or Multi-Role) Server sizing now being:

| Recommended Maximum CPU Core Count |

24

|

| Recommended Maximum Memory |

96 GB

|

In reply to the above, VMware have released the following article.

A Stronger Case For Virtualizing Exchange Server 2013 – Think “Performance”

Personally, I don’t think the article will (or has) had the effect VMware and Virtualization architects/admins would have liked due to its negativity. As such I am going to highlight the important points in this post.

In response to VMware’s article, a well known member of the Exchange community and MVP, Tony Redmond wrote the below article.

VMware tells Microsoft that they don’t know anything about Exchange 2013 performance

In this case, even as a strong Virtualization (and VMware) evangelist, I think Tony has some valid points.

Tony mentions the following regarding the sizing tool:

Most experienced people take the output from any general-purpose sizing tool and cast a cold eye over its recommendations to put them into context with the operational and business requirements for a deployment. In other words, the recommendations are adjusted. And yes, sometimes those recommendations are adjusted to make sure that Exchange 2013 works well when deployed on virtualized servers, either Hyper-V or VMware.

I totally agree! No matter if the sizing tool was recommending more CPUs or not, experienced architects should be making decisions based on many factors, the most important being their real world experience and using the calculator as a secondary (or tertiary) input and adjusting for the underlying infrastructure, regardless of it being physical or virtualized on one of many viable hypervisors.

I also agree with the following point:

Third, wouldn’t it be better use of VMware’s time to publish well-argued and pertinent observations on how you can take the output of Microsoft sizing tools and adjust them for their platform

VMware can be considered the experts in their own technology and they should as Tony suggested publish and continue to update documentation on how best to successfully deploy Exchange on vSphere. There is no advantage in an “I told you so” style blog posts when Microsoft have actually published recommendations which to the one point of VMware’s blog that I agree with, Strengthens the case for Virtualization of Exchange.

For companies like Nutanix who have numerous Virtualization experts across multiple hypervisors including vSphere, Hyper-V and Acropolis (which is fully supported for Windows 2012 and Exchange) we should also post reference architectures and best practice guides on how to deploy Exchange successfully.

Tony also commented in reply to VMware’s post which was not favourable towards Multi-role deployments:

Combined role means a multi-role server, which I think that every reasonable expert working with Exchange has concluded is the only way to go because it increases server utilization and improves the overall resilience of any deployment. But it’s a bad thing in VMware’s world, which is a pity for them because Exchange 2016 only supports multi-role servers, so I guess they will just have to get used to that fact.

VMware’s point here is scaling out (rather than up) means in general better overall consolidation and performance. Now to be fair this is true but one cannot apply a blanket rule for all applications.

Deploying Exchange 2013 in a Multi-Role configuration is a good way to simplify as well as ensure consistent performance and resiliency in the event of a server failure as all servers can service all roles.

As Exchange is generally considered a Business Critical Application, Virtualization architects like myself should consider the recommendations for the application, the critically of the solution to the customer and make an informed recommendation for its deployment. For an app like Exchange, I think its fair to say the MS Exchange team have solid justification to recommend MSR deployments.

The impact of less scale out (i.e.: MBX + CAS) is simply a design consideration, and not an uncommon one so this in my opinion is not an issue for virtual deployments.

Key Take Aways:

1. Ensure you size within Microsoft’s revised vCPU / vRAM recommended configuration maximums (24 vCPUs / 96GB RAM)

2. When sizing Exchange on any Hypervisor, start small and scale up vCPU/vRAM requirements as required to avoid oversizing. (This is a major advantage of virtualization, so don’t be afraid to use it!)

3. Multi-Role Deployments are perfectly fine for Virtual environments. Exchange is a Business Critical Application, so treat it as such in your design phase.

Final thoughts:

With the ever increasing number of cores per socket, the case to virtualize Exchange is strengthened when considering 12-18c CPUs are not uncommon these days. As such an 18vCPU / 96GB RAM Exchange 2013 MSR VM could be virtualized with zero CPU/RAM overcommitment (as is generally recommended) while running other VMs on the same host.

This helps to remove silos within the datacenter as well as driving up utilization (without creating resource contention) which equates to lower cost/power/cooling/maintenance, the list goes on.

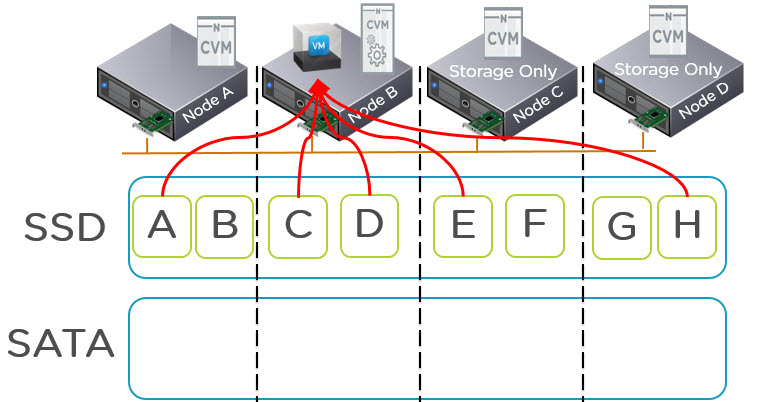

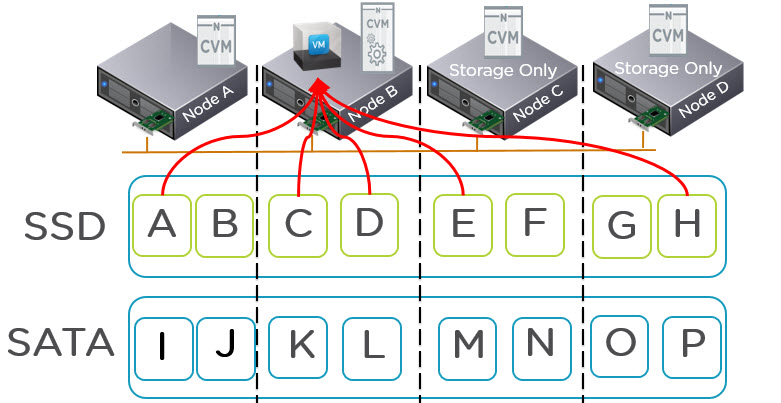

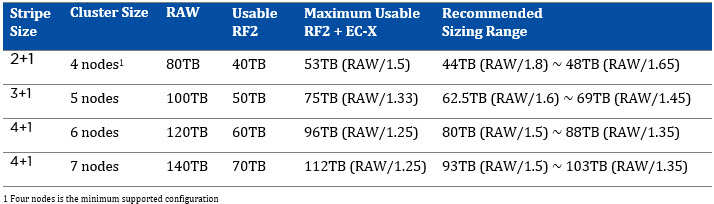

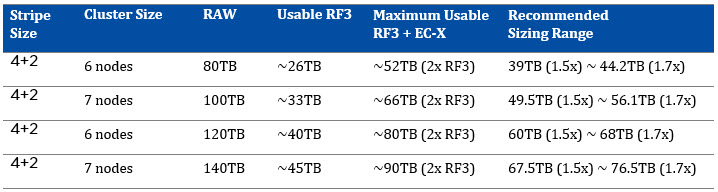

While Virtualization does add some complexity & cost, I would argue with newer technologies such as Nutanix which replace complex and costly SAN/NAS storage with simple to deploy and manage scale out storage these (storage) challenges are soon going to be things of the past.

Nutanix also allows customers to choose a premium feature rich hypervisor such as ESXi, or lower cost/feature solutions such as Hyper-V or Acropolis which allows customers to work with what they are comfortable with.

Acropolis for example is fully supported by Microsoft (see Microsoft SVVP) and can be deployed in <30mins with just a few clicks and its free with Nutanix, so the cost and complexity arguments against virtualization of Exchange just went out the window and Exchange would have all the benefits of things like Virtual Machine High Availability, Migration (vMotion),

Please to hear everyone’s thoughts.